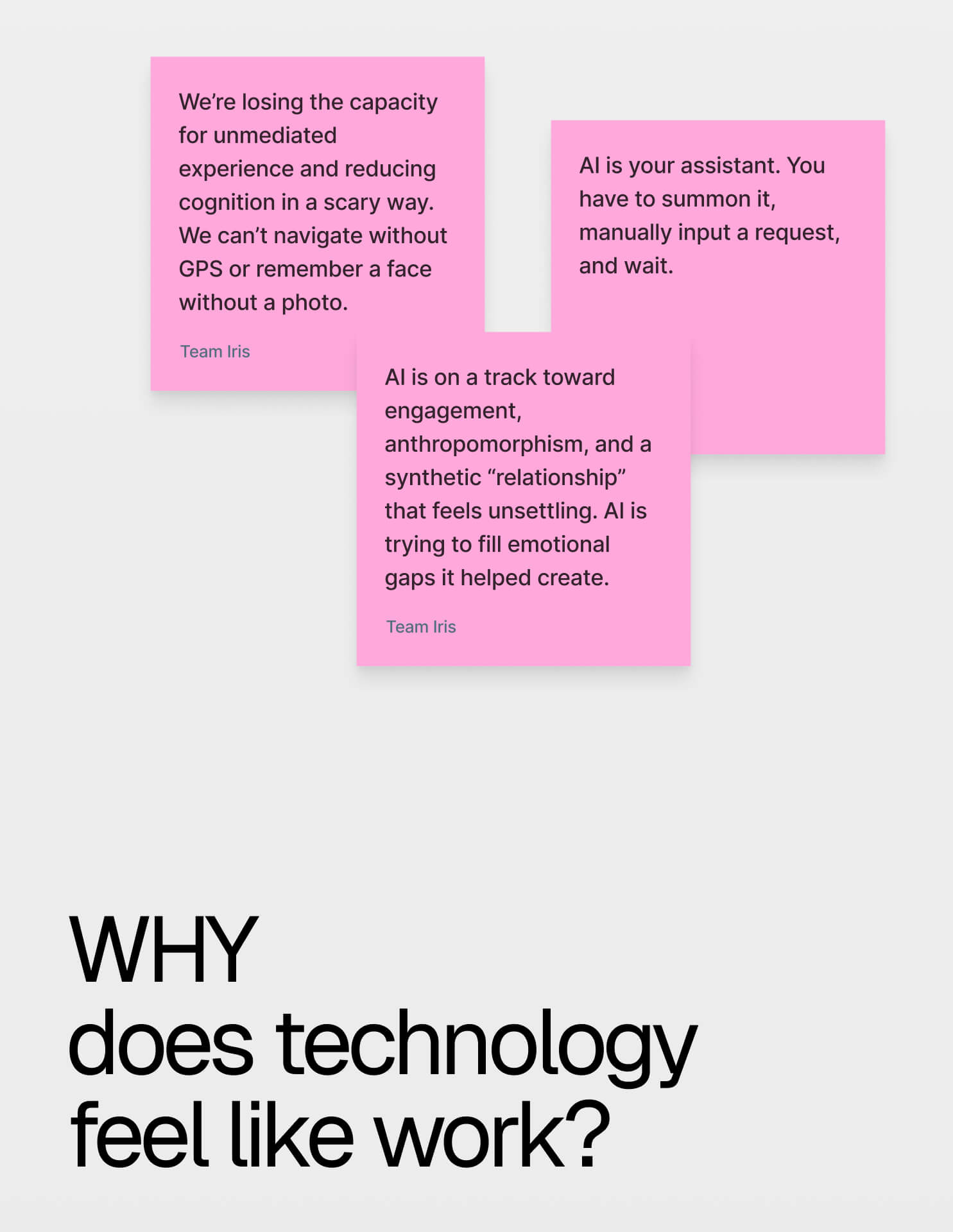

Early in the hackathon, our team explored a mobile app concept proposed by the business strategists to help musicians match, meet, and play together. However, the idea still relied on traditional screen-based interface patterns.

At that point, I proposed reframing the problem entirely. Instead of designing another app or dashboard, I asked a different question:

What if intelligence could live around you rather than inside your phone?

That shift led to the core vision behind Iris: an agentic AI system that operates as an ambient interface layer through smart glasses, delivering contextual assistance without requiring users to constantly return to a screen.
